How it Works

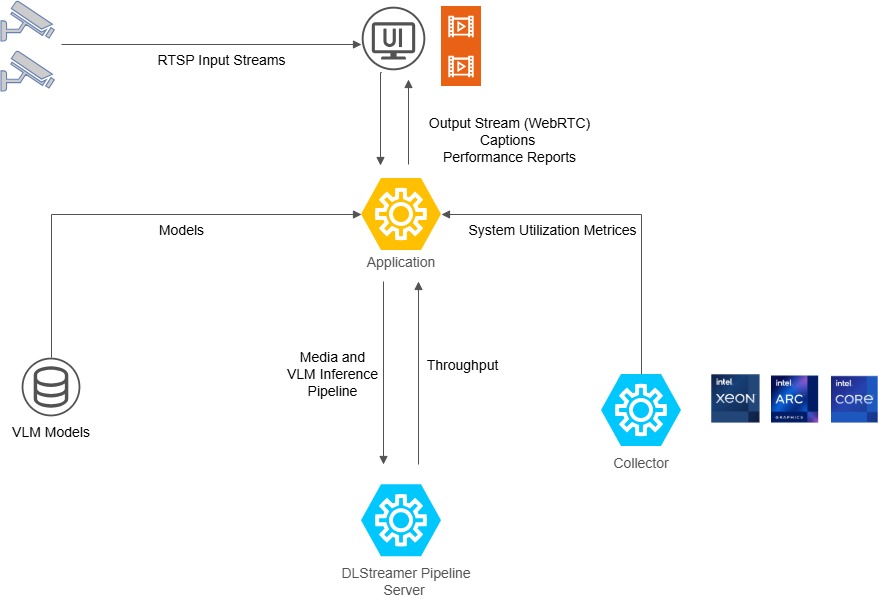

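The stack ingests an RTSP stream into the DL Streamer Pipeline Server, which splits it into two branches. A 1 fps AI branch samples frames for VLM inference (the gvagenai GStreamer element) and publishes results to an MQTT broker, while a 30 fps preview branch is streamed over WebRTC through mediamtx to the dashboard. The dashboard shows the results alongside system metrics (CPU, GPU, RAM).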

Data Flow

    flowchart LR
      subgraph FLOW["Data Flow"]
        RTSP["RTSP Source"] --> DPS["DL Streamer Pipeline Server"]
        DPS -->|"1fps AI branch\n(GStreamer gvagenai)"| MQTT["MQTT Broker"]
        DPS -->|"30fps preview"| MTX["mediamtx (WebRTC)"]
        MTX --> DASH["Dashboard"]
        DASH --> METRICS["Dashboard collects metrics\n(CPU, GPU, RAM)"]
      end
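
The split between the 30 fps preview and the 1 fps AI branch comes down to simple frame-rate arithmetic. The following is a minimal sketch of that arithmetic only; in the real stack the sampling happens inside the GStreamer pipeline, and the function name `select_ai_frames` and its parameters are hypothetical, introduced here purely for illustration.

```python
from fractions import Fraction
from typing import List


def select_ai_frames(num_frames: int, source_fps: int = 30, ai_fps: int = 1) -> List[int]:
    """Return the indices of frames that would be forwarded to the AI branch.

    Hypothetical helper: illustrates down-sampling a source_fps stream to
    ai_fps by forwarding a frame whenever the next sampling instant is reached.
    """
    if source_fps <= 0 or ai_fps <= 0:
        raise ValueError("frame rates must be positive")
    if ai_fps > source_fps:
        raise ValueError("AI branch cannot run faster than the source")

    step = Fraction(source_fps, ai_fps)  # source frames per AI frame
    next_due = Fraction(0)               # index at which the next AI frame is due
    selected: List[int] = []
    for index in range(num_frames):
        if index >= next_due:
            selected.append(index)
            next_due += step
    return selected


# Three seconds of a 30 fps stream yields three frames for the AI branch.
print(select_ai_frames(90))
```

Every frame still goes to the preview branch; only the selected indices would reach VLM inference, which keeps inference cost independent of the preview frame rate.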

System Components

dlstreamer-pipeline-server: Intel DLStreamer Pipeline Server processing RTSP sources with GStreamer pipelines and gvagenai for VLM inference

mediamtx: WebRTC/WHIP signaling server for video streaming

coturn: TURN server for NAT traversal in WebRTC connections

app: Python FastAPI backend serving REST APIs, SSE metadata streams, and WebSocket metrics

collector: Intel VIP-PET system metrics collector (CPU, GPU, memory, power)
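
To make the "SSE metadata streams" served by the app component more concrete, here is a minimal sketch of how a single inference result could be framed as a Server-Sent Events message. The wire format (`event:` and `data:` fields terminated by a blank line) follows the SSE specification; the helper name `format_sse_event`, the event name `vlm_result`, and the payload fields are assumptions for illustration and are not taken from the actual backend.

```python
import json
from typing import Optional


def format_sse_event(payload: dict, event: Optional[str] = None) -> str:
    """Serialize a payload as one Server-Sent Events message.

    Hypothetical helper: the real backend's payload schema is not shown
    in this document, so the fields used below are placeholders.
    """
    lines = []
    if event is not None:
        lines.append(f"event: {event}")
    # json.dumps without indent produces a single line, so one data field suffices.
    lines.append("data: " + json.dumps(payload, separators=(",", ":")))
    # A blank line terminates the message.
    return "\n".join(lines) + "\n\n"


# Example with placeholder fields (assumed, not the real schema).
message = format_sse_event({"frame": 30, "text": "a person walks by"}, event="vlm_result")
print(message, end="")
```

A browser client would receive such messages through the standard `EventSource` API, one message per inference result.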