# FAQ

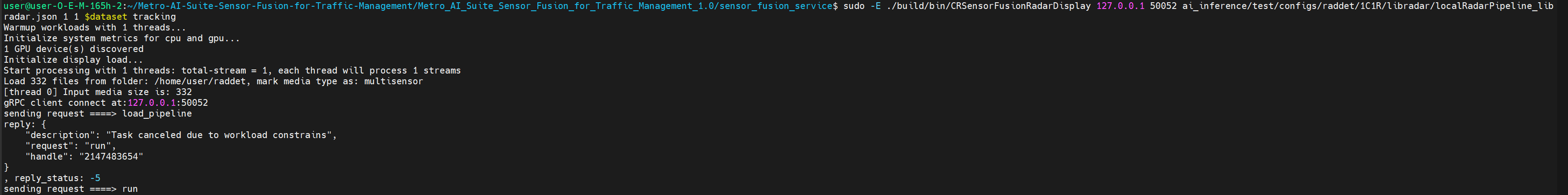

If you run different pipelines in a short period of time, you may encounter the following error:

Figure 1: Workload constraints error This is because the maxConcurrentWorkload limitation in

AiInference.configfile. If the workloads hit the maximum, task will be canceled due to workload constrains. To solve this problem, you can kill the service with the commands below, and re-execute the command.sudo pkill Hce

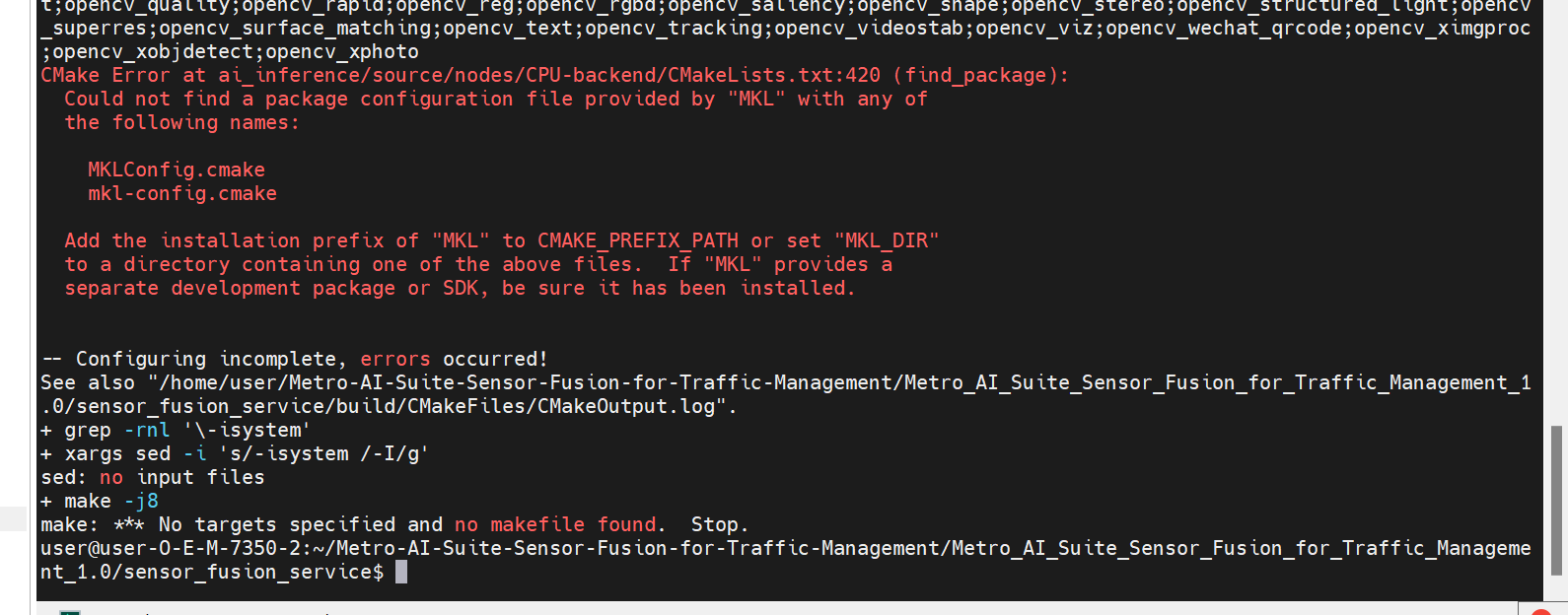

If you encounter the following error during code compilation, it is because mkl is not installed successfully:

Figure 2: Build failed due to mkl error Run

ls /opt/intelto check if there is a OneAPI directory in the output. If not, it means that mkl was not installed successfully. You need to reinstall mkl by following the steps below:curl -k -o GPG-PUB-KEY-INTEL-SW-PRODUCTS.PUB https://apt.repos.intel.com/intel-gpg-keys/GPG-PUB-KEY-INTEL-SW-PRODUCTS.PUB -L sudo -E apt-key add GPG-PUB-KEY-INTEL-SW-PRODUCTS.PUB && sudo rm GPG-PUB-KEY-INTEL-SW-PRODUCTS.PUB echo "deb https://apt.repos.intel.com/oneapi all main" | sudo tee /etc/apt/sources.list.d/oneAPI.list sudo -E apt-get update -y sudo -E apt-get install -y intel-oneapi-mkl-devel lsb-release
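The directory check described above can also be scripted. The following is a minimal sketch, not part of the official installation steps: `has_oneapi_dir` is a hypothetical helper name, and the case-insensitive comparison is an assumption, made because the directory is often spelled `oneapi` on disk.

```shell
# Hypothetical helper: succeeds when the given directory (default /opt/intel)
# contains an entry named "oneapi", compared case-insensitively (assumption).
has_oneapi_dir() {
    root="${1:-/opt/intel}"
    ls "$root" 2>/dev/null | grep -qi '^oneapi$'
}

# Example use on the target machine:
#   has_oneapi_dir || echo "oneAPI directory not found, reinstall mkl"
```

The function only reports a result through its exit status, so it can be combined with `||` or `if` without printing anything itself.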

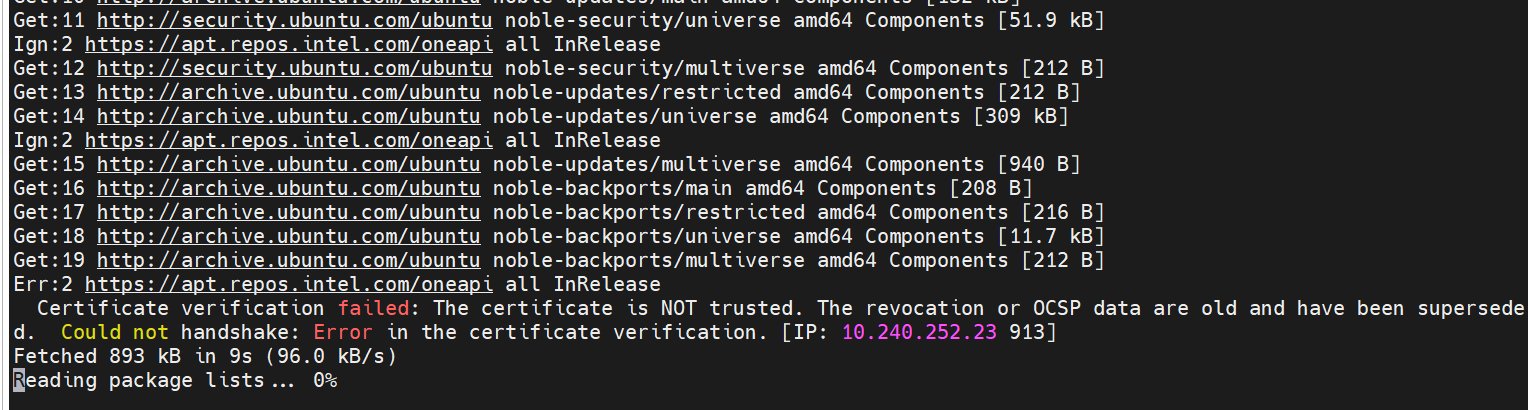

If the system time is incorrect, you may encounter the following errors during installation:

Figure 3: System Time Error You need to set the correct system time, for example:

sudo timedatectl set-ntp true
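Before retrying the installation, it can help to confirm that the clock has actually synchronized. The sketch below assumes a systemd version whose `timedatectl show` command prints an `NTPSynchronized=yes` or `NTPSynchronized=no` line. `clock_is_synced` is a hypothetical helper name, not something provided by the project.

```shell
# Hypothetical helper: succeeds when the `timedatectl show` output passed as
# the first argument contains the line "NTPSynchronized=yes".
clock_is_synced() {
    printf '%s\n' "$1" | grep -qx 'NTPSynchronized=yes'
}

# Example use on the target machine:
#   clock_is_synced "$(timedatectl show)" && echo "clock is synchronized"
```

Synchronization can take a short while after NTP is enabled, so a negative result immediately after `set-ntp true` does not necessarily indicate a problem.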

Then re-run the installation, removing the previously installed package first:

```shell
sudo apt-get remove --purge intel-oneapi-mkl-devel
sudo apt-get autoremove -y
sudo apt-get install -y intel-oneapi-mkl-devel
```

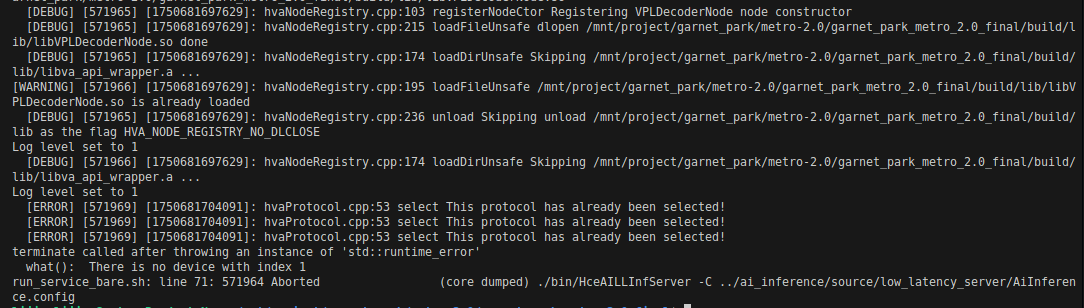

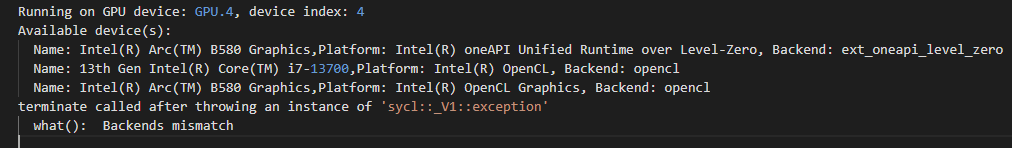

If you encounter the following errors during running on B580 platform:

Figure 4: Device Index Error It may be because the iGPU is not enabled, only the B580 is enabled.

You can use

lspci | grep VGAto view the number of GPU devices on the machine.The solution is either enable iGPU in BIOS, or change the config of

Device=(STRING)GPU.1toDevice=(STRING)GPUinVPLDecoderNodeandVPLDecoderNodein pipeline config file, for example:ai_inference/test/configs/kitti/6C1L/localFusionPipeline.json.If you encounter the following backends mismatch errors during running pipeline:

Figure 5: Backends Mismatch Error

This happens because a wrong or non-existent device is selected. The device to select is the dGPU + opencl backend. As shown in the figure, it is device number 2 (numbering starts from 0), that is, `GPU.2`.

The solution is to change `Device=(STRING)GPU.4` to `Device=(STRING)GPU.2` in `LidarSignalProcessingNode` in the pipeline config file, for example `ai_inference/test/configs/kitti/6C1L/localFusionPipeline.json`.
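The two device-related fixes above both come down to counting the GPUs and then rewriting a `Device=(STRING)...` value in the pipeline config. The sketch below illustrates that with two hypothetical helpers, `count_vga_devices` and `replace_device`, which are not part of the project. It assumes the device setting appears literally as `Device=(STRING)<name>` in the config text, and it replaces every occurrence rather than only the one in a particular node, so review the result before using it.

```shell
# Hypothetical helper: count VGA controllers in `lspci` output read from stdin.
count_vga_devices() {
    grep -c 'VGA'
}

# Hypothetical helper: read config text from stdin and print it with every
# "Device=(STRING)<old>" changed to "Device=(STRING)<new>".
#   $1 = old device name (e.g. GPU.4), $2 = new device name (e.g. GPU.2)
# The trailing group keeps GPU.1 from also matching GPU.10.
replace_device() {
    old_escaped=$(printf '%s' "$1" | sed 's/\./\\./g')
    sed -E "s/(Device=\(STRING\))${old_escaped}([^.0-9]|\$)/\1$2\2/g"
}

# Example use on the target machine:
#   lspci | count_vga_devices
#   replace_device GPU.4 GPU.2 < localFusionPipeline.json > localFusionPipeline.new.json
```

Writing the result to a new file instead of editing in place leaves the original config available for comparison with `diff`.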