Model Preparation & Conversion

AI models are often portable and can be adapted to different frameworks. OpenVINO, the main Open Edge Platform solution for inference and for preparing pre-trained models, lets you use models from PyTorch, TensorFlow, TensorFlow Lite, ONNX, PaddlePaddle, and JAX/Flax (experimental). For best compatibility and performance, it also offers its own format, OpenVINO IR.

This means that applications using TensorRT, Triton, or DeepStream can often be ported to Intel equivalents with minimal effort, letting you benefit from a software ecosystem optimized for Intel hardware, including CPUs, GPUs, VPUs, and FPGAs.
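As a rough illustration of the conversion step, the sketch below turns a model file into OpenVINO IR using `openvino.convert_model` and `openvino.save_model`. The helper names (`detect_framework`, `convert_to_ir`), the suffix map, and the file names are assumptions made for this example, not part of OpenVINO. The suffix map is deliberately incomplete: PyTorch and JAX/Flax models are typically passed to `convert_model` as in-memory objects rather than file paths.

```python
from pathlib import Path

# Suffix-to-framework map used only for this illustration (an assumption,
# not an exhaustive or official list of what OpenVINO accepts).
SUPPORTED_SUFFIXES = {
    ".onnx": "ONNX",
    ".pb": "TensorFlow",
    ".tflite": "TensorFlow Lite",
    ".pdmodel": "PaddlePaddle",
}


def detect_framework(model_path: str) -> str:
    """Guess the source framework from the file suffix (hypothetical helper)."""
    suffix = Path(model_path).suffix.lower()
    if suffix not in SUPPORTED_SUFFIXES:
        raise ValueError(f"Unrecognized model file suffix: {suffix!r}")
    return SUPPORTED_SUFFIXES[suffix]


def convert_to_ir(model_path: str, output_xml: str) -> None:
    """Convert a model file to OpenVINO IR (an .xml file plus a .bin file)."""
    # Imported lazily so the helper above works without OpenVINO installed.
    import openvino as ov

    detect_framework(model_path)  # fail early on unknown suffixes
    ov_model = ov.convert_model(model_path)  # build an in-memory ov.Model
    ov.save_model(ov_model, output_xml)  # write the IR files to disk
```

Assuming OpenVINO is installed and `model.onnx` exists, calling `convert_to_ir("model.onnx", "model.xml")` should write `model.xml` and `model.bin` next to each other.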

To learn more about supported model formats and conversion options, see the OpenVINO guides:

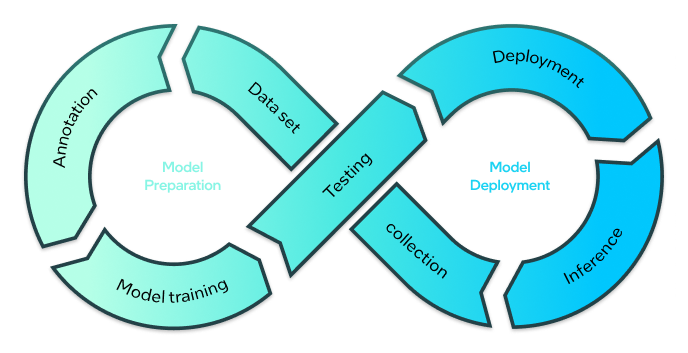

You can also fine-tune and train vision-based models to meet your specific needs using Geti. With its intuitive user interface, you can teach a model to recognize your items or even create a new model from scratch. Simply add image or video data and annotate it. Geti will export a new version of the model, ready for optimization and deployment. It is equipped with state-of-the-art technology, such as active learning, task chaining, and smart annotations, to make labor-intensive tasks easier and faster.

For more information, see Geti Documentation.

Conversion from TAO (.tlt)

OpenVINO does not support direct conversion of TAO models. To use them, first export them to ONNX. For details on migrating from TAO, see the article on TAO migration.
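Once a TAO model has been exported to ONNX, the resulting file can be handled like any other ONNX model. The following is a minimal sketch of that last step, assuming the export has already been done with TAO tooling. The helper names (`require_onnx`, `load_exported_model`) and the file name `exported_model.onnx` are hypothetical; `Core.read_model` and `Core.compile_model` are the OpenVINO calls used.

```python
from pathlib import Path


def require_onnx(model_path: str) -> str:
    """Reject files that have not been exported to ONNX (hypothetical helper)."""
    suffix = Path(model_path).suffix.lower()
    if suffix != ".onnx":
        raise ValueError(
            f"Expected an .onnx file but got {suffix!r}; "
            "export the TAO model to ONNX first."
        )
    return model_path


def load_exported_model(model_path: str, device: str = "CPU"):
    """Read an ONNX file and compile it for the given device."""
    # Imported lazily so require_onnx works without OpenVINO installed.
    import openvino as ov

    core = ov.Core()
    model = core.read_model(require_onnx(model_path))
    return core.compile_model(model, device)
```

For example, `load_exported_model("exported_model.onnx")` would return a compiled model ready for inference on the CPU, while passing a `.tlt` file raises a `ValueError` before OpenVINO is touched.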